MarketingMind

Emotion

Verbal responses often fail to elicit the true nature of emotions. Consumers find it hard to verbalize emotions, and they may not even be conscious of their existence. Moreover, some emotions are personal and perhaps embarrassing to express aloud. There are also subjectivities in languages and cultures. In some countries, for instance, people are less willing to express negative opinions. For these reasons, the focus of emotion research is shifting to indirect interviewing methods and non-verbal approaches.

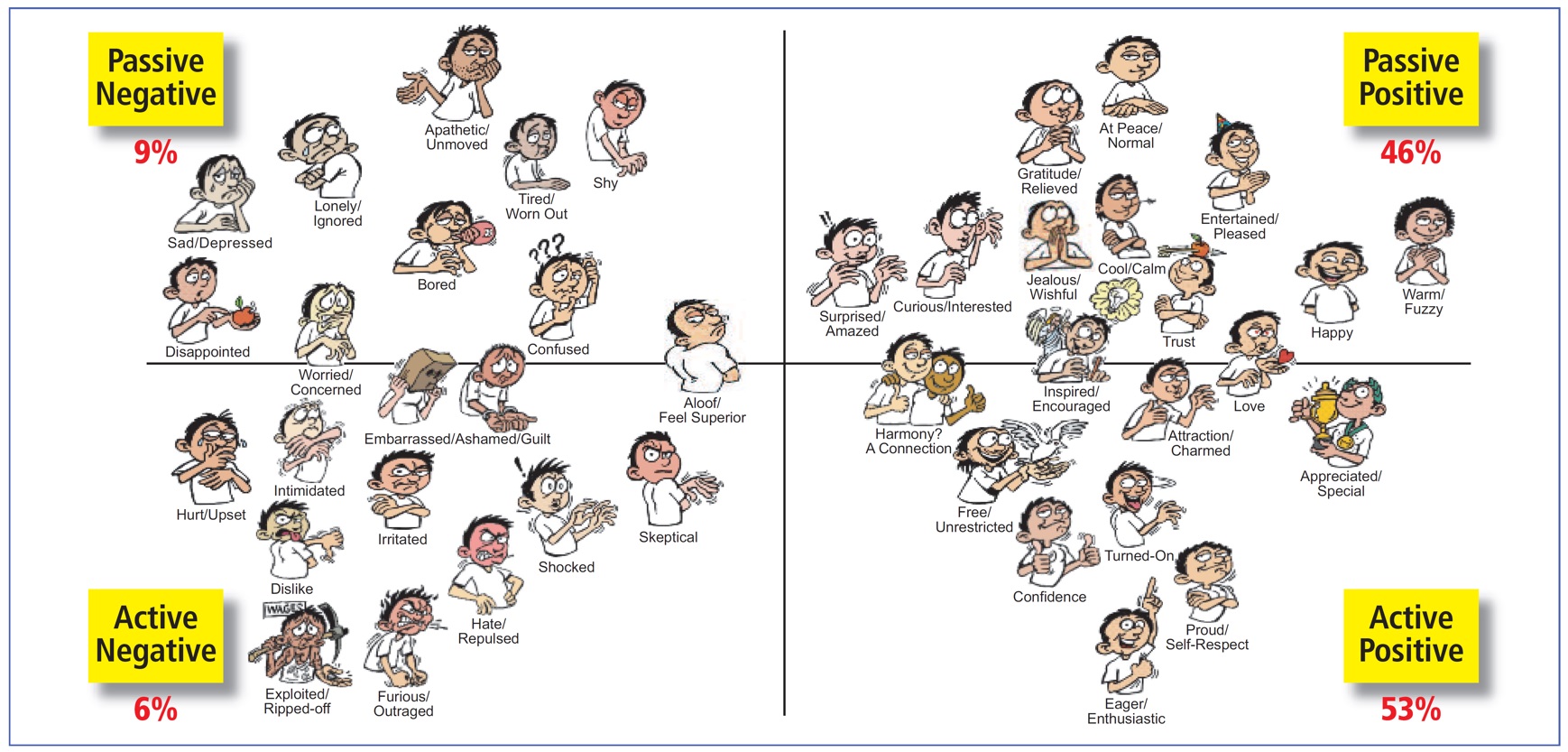

The approach adopted by Ipsos ASI makes use of Emoti*Scape™, a show card containing a map of 40 emotions (see Exhibit 13.10), each represented by an illustration of a facial expression (an emoticon) and a verbal description of the feeling expressed. Respondents are asked to indicate which location on this emotional landscape best represents their feelings for each of the following:

- What feelings do you have from this ad?

- What feelings is the advertiser trying to use and portray within the ad?

- What emotions do you associate with being a brand user?

Their responses are summarized to reflect the emotional reactions and responses to the advertisement and the brand.
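As a rough illustration of how such responses might be summarized, the sketch below tallies the share of answers given to each emotion for each of the three questions. It is not Ipsos ASI's actual procedure: the question keys, the emotion labels and the `summarize` function are all hypothetical names chosen for this example.

```python
from collections import Counter

# Hypothetical data: one (question, emotion) pair per respondent answer.
# The emotion labels are illustrative and are not taken from the show card.
responses = [
    ("feel_from_ad", "amused"),
    ("feel_from_ad", "amused"),
    ("feel_from_ad", "bored"),
    ("advertiser_intent", "excited"),
    ("advertiser_intent", "amused"),
    ("brand_user", "confident"),
    ("brand_user", "confident"),
]


def summarize(responses):
    """Return, for each question, the share of answers given to each emotion."""
    counts = {}
    for question, emotion in responses:
        counts.setdefault(question, Counter())[emotion] += 1
    return {
        question: {
            emotion: n / sum(counter.values())
            for emotion, n in counter.most_common()
        }
        for question, counter in counts.items()
    }


summary = summarize(responses)
for question, shares in summary.items():
    print(question, shares)
```

Comparing the distributions across the three questions would show, for example, whether the feelings respondents report match those they believe the advertiser intended.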

There are also a number of physiological methods of testing advertisements that measure the respondents’ involuntary reactions to stimuli. For instance, research on autism at the MIT Media Lab and the University of Cambridge has spawned non-verbal technologies to measure emotional response.

One of these is a wearable, wireless biosensor that measures emotional arousal via skin conductance, also known as galvanic skin response. This technology detects changes in electrodermal activity, which increases during states such as excitement, attention or anxiety and decreases during states such as boredom or relaxation.
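To make the idea concrete, the following minimal sketch flags stretches of a skin conductance recording that rise noticeably above a resting baseline. It is a simplification under stated assumptions, not the method used by any particular device: the readings, the use of the first few samples as the baseline, the `threshold` value and the `arousal_episodes` function are all hypothetical.

```python
from statistics import mean


def arousal_episodes(signal, baseline_samples=5, threshold=0.5):
    """Return (start, end) index pairs where the signal exceeds baseline + threshold.

    The baseline is the mean of the first `baseline_samples` readings (an
    assumption made for this sketch). `end` is exclusive.
    """
    baseline = mean(signal[:baseline_samples])
    episodes = []
    start = None
    for i, value in enumerate(signal):
        elevated = value > baseline + threshold
        if elevated and start is None:
            start = i  # an elevated stretch begins
        elif not elevated and start is not None:
            episodes.append((start, i))  # the stretch has ended
            start = None
    if start is not None:
        episodes.append((start, len(signal)))
    return episodes


# Hypothetical readings, one per time step, in arbitrary conductance units.
readings = [2.0, 2.1, 2.0, 1.9, 2.0, 2.2, 2.9, 3.1, 2.8, 2.1, 2.0]
episodes = arousal_episodes(readings)
print(episodes)  # [(6, 9)]
```

Aligning the flagged stretches with the timeline of a commercial would indicate which scenes coincide with heightened arousal, although the signal alone does not say whether that arousal is positive or negative.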

Emotional states such as liking and attention can also be revealed from facial expressions, and neuromarketers have developed tools to measure these responses using a webcam. By analysing facial movements and changes in expression, these tools can provide valuable insights into consumers’ reactions to advertising and other stimuli.

This technology is based on the principle that certain facial expressions are associated with specific emotions, such as a smile indicating happiness or a furrowed brow indicating confusion or concern.

Another relevant technology is the electroencephalogram (EEG). Portable, wireless EEG scanners (Exhibit 13.11) enable neuroscientists to gain insights into how the mind responds to stimuli. Sensors covering the surface area of the brain capture synaptic (brain) waves, then amplify and dispatch them to a remote computer. The resulting streams of data reveal participants’ subconscious responses to advertisements, and capture their emotion, attention and memory retention during the course of the commercial.

Exhibit 13.11 Fourier One is a medical-grade, dry and wireless EEG headset developed by Nielsen Neuro (Photo courtesy of the Consumer Neuroscience division of Nielsen).

EEGs are appropriate for capturing signals about attention, arousal, fatigue and surprise, which are emitted from the brain’s surface. They are not as effective in picking up signals from deeper within the brain, which are key for decision making. EEGs are therefore better suited for testing feelings and emotions, and are not appropriate for testing informational ads or commercials that require thinking.

The need for a controlled location or laboratory environment makes EEGs somewhat restrictive and removed from the natural settings in which advertisements are processed. Even so, the use of EEGs is picking up as equipment costs decline.

In recent years, there has been a rapid increase in the development and adoption of non-conventional analytics techniques, including eye tracking, EEG, facial coding, and biosensing. Many organizations, including startups, are building devices that leverage these technologies to measure human behaviour and emotion. Additionally, some organizations like iMotions have developed unified software platforms that enable researchers to integrate these technologies with surveys and other conventional research methods.

Marketers are now using a blend of eye tracking, EEG, facial coding and biometrics, in combination with conventional quantitative and qualitative research studies, to evaluate the effectiveness of advertising and other elements of the marketing mix, including packaging and product usage. Collectively, these techniques provide a rich source of insights into consumer behaviours and emotions. While biometric measures reveal the extremes of emotional engagement, EEG provides a more nuanced understanding of respondents’ emotions, thoughts and motivations. Facial coding helps to interpret their facial expressions, and eye tracking identifies specific elements of the ad or stimulus that capture consumers’ attention and trigger their responses.

Details about eye tracking, EEG, facial coding and biometric devices are provided in the chapter Biometrics.

Contact | Privacy Statement | Disclaimer: Opinions and views expressed on www.ashokcharan.com are the author’s personal views, and do not represent the official views of the National University of Singapore (NUS) or the NUS Business School | © Copyright 2013-2026 www.ashokcharan.com. All Rights Reserved.